Machine Learning in Flutter

So, Machine Learning is out there in the open, and it has been for quite a long time. The term was coined back in the previous century, and today it is so widespread in daily life that you are probably surrounded by it without even knowing. Consider your smartphone: it is called “smart” for a reason, and it keeps getting smarter from day to day because it learns about you from you. The more you use it, the more it gets to know you, and all of that is just a machine running lines of code that evolve over time, much like humans do. It’s not limited to smartphones either; it is used extensively across technology.

But sticking with smartphones: they are full of apps that make your daily life easier, and using Machine Learning is a smarter way to build those apps. Building cross-platform apps with Flutter has been fun for a while, so what if you could build an intelligent app and have fun doing it with Flutter? Don’t worry, we’ve got you covered. We start from the basics and give you a step-by-step overview that will help you build Flutter apps using Machine Learning.

Machine Learning overview:

Machine Learning is the field of study that gives computers the capability to learn without being explicitly programmed.

In layman’s terms, Machine Learning can be defined as the scientific study of the statistical models and algorithms that a computer uses to perform specific tasks effectively without being given explicit instructions. It is an application of Artificial Intelligence that enables a system to learn and improve through experience, where the system collects data and learns from it.

What does Learning mean to a Computer?

A computer program is said to learn from experience E with respect to some class of tasks T and performance measure P if its performance at tasks in T, as measured by P, improves with experience E.

For example, consider playing chess.

Here,

E = the experience gained from playing chess

T = the task of playing chess

P = probability of winning the next game
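To make the definition concrete, here is a minimal Python sketch. It is not taken from any library: the task, the `ThresholdLearner` class and the example numbers are all made up for illustration. The task T is to decide whether a number from 0 to 99 is “big” (60 or more), the experience E is a stream of labelled examples, and the performance P is how many of the 100 numbers the program classifies correctly.

```python
# Hypothetical toy task: a number is "big" when it is 60 or more.
# The learner is never told the value 60; it only sees labelled examples.
def true_label(x):
    return x >= 60


class ThresholdLearner:
    """Guesses a cut-off halfway between the largest 'small'
    and the smallest 'big' example it has seen so far."""

    def __init__(self):
        self.max_small = None
        self.min_big = None

    def learn(self, x, is_big):
        # Experience E: one labelled example at a time.
        if is_big:
            self.min_big = x if self.min_big is None else min(self.min_big, x)
        else:
            self.max_small = x if self.max_small is None else max(self.max_small, x)

    def predict(self, x):
        # Until both kinds of example have been seen, default to "small".
        if self.max_small is None or self.min_big is None:
            return False
        return x > (self.max_small + self.min_big) / 2


def performance(learner):
    # Performance P: how many of the numbers 0..99 are classified correctly.
    return sum(learner.predict(x) == true_label(x) for x in range(100))


def learning_curve(examples):
    # Record P before any experience and after each new example.
    learner = ThresholdLearner()
    scores = [performance(learner)]
    for x in examples:
        learner.learn(x, true_label(x))
        scores.append(performance(learner))
    return scores


if __name__ == "__main__":
    print(learning_curve([10, 90, 40, 70, 55, 62]))
    # prints [60, 60, 91, 94, 96, 97, 99]
```

With these particular examples the score never goes down as more examples arrive, which is exactly what “performance at T, as measured by P, improves with experience E” means. A real chess program is far more complex, but the idea is the same.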

Examples of implementation of machine learning in real life:

A good way to understand something, or to help someone else understand it, is to explore how it is used in real life. Following that practice, let us consider some real-life scenarios where you come across Machine Learning on a day-to-day basis.

1. Google Photos. We guess everyone is aware of this app on their phone, made and maintained by Google. One excellent feature it offers is its use of Machine Learning’s face recognition algorithms, which recognize the people in your photos based on their facial features. Never encountered it? Head over to the Photos app and experience it yourself.

2. Google Lens. Released in the second half of 2017, this Google app performs image recognition in real time. Just point your camera at an image, or ask Google Assistant to scan an image using this app, to get insightful information extracted from it. That can mean acting on text, like saving a contact or fetching an e-mail ID, finding a dress you like in the image on the web, exploring places nearby, or identifying plants and animals. This app lets you explore smartly and gives you a futuristic feel, and all of this is possible because of one thing: Machine Learning.

3. Shopping online. This is another very common implementation of Machine Learning. Did you ever notice that when you look at a product on an online platform frequently but, for whatever reason, don’t buy it, your social media like Facebook, the webpages you visit and even the shopping store itself start recommending that particular product? This isn’t some human being sitting at a desk and pushing these adverts to you. NO, in fact, it is Machine Learning algorithms that do it.

4. Medical enhancements. Yes! Machine Learning plays a crucial role in the medical field too. Nowadays, various diseases can be detected just by looking at a slide (cell image), and this is performed by machines using models that scientists have developed, for something that would take humans a significant amount of time.

And frankly speaking, this list of examples is quite long. Just imagine anything that learns from its past experience and improves over time.

Firebase and Machine Learning:

So, that was just an overview of Machine Learning, giving you a brief idea of what Machine Learning is and where you come across it in day-to-day activity. Don’t worry, we haven’t lost track of our objective, which is to get familiar with Machine Learning in Flutter. Heading forward, the next topic to explore is Firebase. We will start with why we have to explore Firebase here.

Firebase is Google’s mobile and web app development platform. It provides developers with a plethora of tools and services to help them develop high-quality apps, usually by providing a backend for the app. Most developers use it to create a database for their apps, as it helps build apps faster without having to manage the infrastructure.

We will be using Firebase because of Firebase ML Kit, a mobile SDK that brings Google’s machine learning expertise to Android and iOS apps in a powerful yet easy-to-use package. Whether you’re new or experienced in machine learning, you can implement the functionality you need in just a few lines of code. There’s no need to have deep knowledge of neural networks or model optimization to get started. On the other hand, if you are an experienced ML developer, ML Kit provides convenient APIs that help you use your custom TensorFlow Lite models in your mobile apps.

Main highlights of Firebase ML Kit:

1. Production ready:

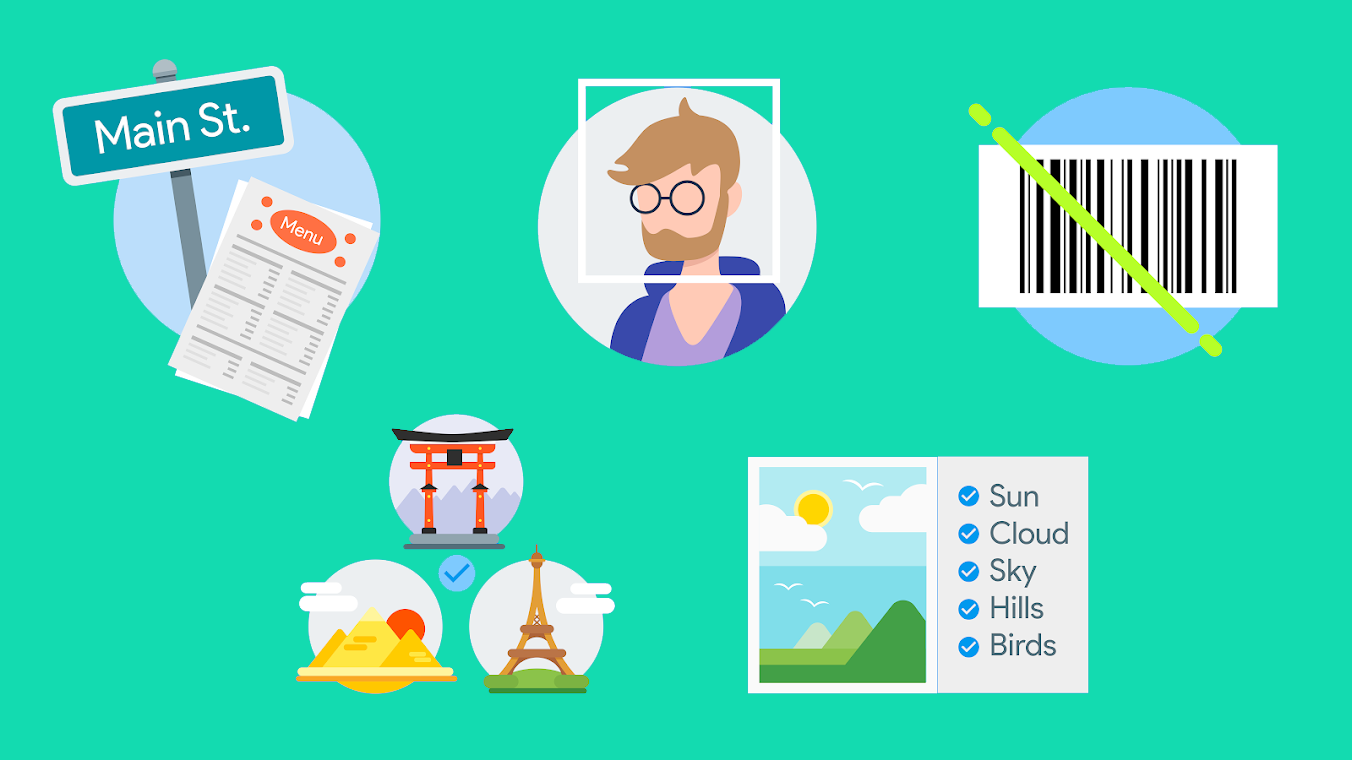

Firebase ML Kit comes with a set of ready-to-use APIs such as text recognition, face detection, landmark recognition, barcode scanning, image labeling, and language identification. You just use the libraries, and they give you the information you need.

2. Importing Custom Model:

Suppose you have your own ML models built in TensorFlow. Don’t worry, Firebase has got you covered: it lets you import your TensorFlow Lite models. Just upload your models to Firebase, and Firebase will take care of hosting and serving them to your app. Firebase acts as the API layer to your custom model.

3. Cloud or On-device:

Whether you want to use it in the cloud or on-device, Firebase ML Kit works smoothly, securely and efficiently. Its APIs work both in the cloud and on-device. The on-device APIs work even when there are network issues, while the cloud-based APIs are powered by Google Cloud Platform’s machine learning technology.

Work Methodology:

So, you must be wondering how all of this even works. If so, trust us, we were in the same position until we started to dig, and it turned out to be as easy as pie. ML Kit brings Google’s Machine Learning technologies, such as the Google Cloud Vision API, TensorFlow Lite and the Android Neural Networks API, together in a single SDK. Now just imagine the power of all these technologies under one hood: whether you want the power of cloud processing, the real-time potential of mobile-optimized models or even your own custom models, all of it is handled in a few lines of code.

Implementation:

The path of implementation includes just three steps, yes! just three:

1. SDK integration:

Integrate the SDK using Gradle on Android or CocoaPods on iOS.

2. Prepare input data:

Let us explain it with an example. If you’re using a vision feature, capture an image from the camera and generate the necessary metadata such as image rotation, or prompt the user to select a photo from their gallery.

3. Apply the ML model to your data:

By applying the ML model to your data, you can generate a wide spectrum of insights, such as the emotional state of detected faces or the objects and concepts recognized in the image, depending on the feature you used. You can then use these insights to power features in your app, like photo embellishment or automatic metadata generation.

Various ready-to-use APIs:

1. Text Recognition:

By using ML Kit’s text recognition API you can identify text in any Latin-based language, and in more languages with the cloud-based API. It can automate tedious data entry by itself, and with the cloud-based API you can even extract text from pictures of documents. Apps can also keep track of real-world objects by reading the text on them.

2. Face Detection:

By using ML Kit’s face detection API you can detect faces in an image and identify key facial features: it locates the eyes, nose, ears, cheeks and mouth, and can even give you the contours of the detected faces. The information collected through this API can help embellish your selfies, for instance when you want to beautify your face in real time, and help capture portraits in portrait mode. Because it performs face detection in real time, you can also use it to generate emojis or add filters in a video call or snap.

3. Barcode scanning:

By using ML Kit’s barcode scanning API you can scan barcodes to get the encoded data. Barcodes are a convenient way to pass information from the real world to your app. They can encode structured data such as Wi-Fi credentials or contact information.
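To give a feel for what “structured data” in a barcode looks like, here is a small Python sketch. It does not call ML Kit at all. It assumes the scanner has already handed you the decoded text, that the text follows the widely used `WIFI:T:...;S:...;P:...;;` convention for Wi-Fi QR codes, and that none of the values contain escaped `;` or `:` characters. `parse_wifi_payload` is a hypothetical helper name.

```python
def parse_wifi_payload(payload):
    """Parse a 'WIFI:T:WPA;S:MyNetwork;P:secret;;' style string.

    Assumption: values contain no escaped ';' or ':' characters.
    """
    prefix = "WIFI:"
    if not payload.startswith(prefix):
        raise ValueError("not a Wi-Fi payload")

    fields = {}
    for part in payload[len(prefix):].split(";"):
        if not part:
            continue  # skip the empty pieces left by the trailing ';;'
        key, _, value = part.partition(":")
        fields[key] = value

    # T = security type, S = network name (SSID), P = password.
    return {
        "ssid": fields.get("S"),
        "password": fields.get("P"),
        "security": fields.get("T"),
    }


if __name__ == "__main__":
    print(parse_wifi_payload("WIFI:T:WPA;S:MyNetwork;P:secret123;;"))
```

In a real app you would usually not need to parse this yourself, since the point of the API is to give you the encoded data in a convenient form; the sketch only shows why a barcode is a handy carrier for this kind of information.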

4. Image Labeling:

By using ML Kit’s image labeling API you can recognize various entities in an image without having to provide any additional metadata, using either the on-device or the cloud-based API. Basically, image labeling gives you insight into the content of your image. When you use this API you get a list of the entities that were recognized, such as people, places, activities and more. Each label comes with a score that indicates how confident the ML model is in its observation.
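A common thing to do with those scores is to keep only the labels the model is reasonably sure about. The Python sketch below illustrates the idea on made-up data. The `Label` type, the `confident_labels` helper, the sample labels and the 0.7 threshold are all assumptions for illustration, not part of ML Kit; it also assumes the score is a number between 0 and 1.

```python
from typing import NamedTuple


class Label(NamedTuple):
    # Hypothetical stand-in for one result of an image labeling call.
    text: str
    confidence: float  # assumed to be between 0.0 and 1.0


def confident_labels(labels, threshold=0.7):
    """Keep labels at or above the threshold, most confident first."""
    kept = [label for label in labels if label.confidence >= threshold]
    return sorted(kept, key=lambda label: label.confidence, reverse=True)


if __name__ == "__main__":
    sample = [Label("Sky", 0.92), Label("Dog", 0.55), Label("Beach", 0.81)]
    for label in confident_labels(sample):
        print(f"{label.text}: {label.confidence:.2f}")
```

The right threshold depends on your app: a lower value shows more labels but lets more wrong guesses through, while a higher one shows fewer but more reliable labels.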

5. Landmark Recognition:

By using the landmark recognition API you can recognize popular landmarks in an image that you provide. After passing the image to it, you get the landmarks that were recognized, along with each landmark’s geographic coordinates and the region of the image where it was found.

6. Language Identification:

By using the language identification API you can determine the language of a given text. This is helpful when working with user-provided text that doesn’t come with any information about its language.

Integrating Machine Learning Kit in Flutter:

Phewww! That was a lot of background knowledge, but NO, it wasn’t just there to spam you with words. All of it was to give you an overview of Machine Learning and Firebase’s ML Kit, and if you were already aware of them, then you know how crucial it is to understand them before moving any further.

But finally, we’re at the part where we integrate Firebase’s ML Kit with Flutter, and it’s a piece of cake from here onwards.

1. Installing plugins:

The basic requirement is to install Flutter’s ML Vision plugin, which has been built by the Flutter dev team itself. You can use a couple more plugins depending on your requirements. For example, if you’re going to work with images, use the image picker plugin, which lets the user either take a picture with the camera or pick an image from the gallery.

2. Set up the Firebase project:

If you already know how to set up a Firebase project, you are good to go for this step. If you aren’t familiar with it, the video below will help you set up a Firebase project using Firebase’s Cloud Firestore rather than the Firebase Realtime Database.

To know about Cloud Firestore, watch

Now you’re done and good to go with the fundamental requirements and knowledge needed to use Machine Learning in Flutter. You can use the various APIs available in Firebase ML Kit to get your work done. In this blog we won’t be going through a codelab for Machine Learning, but we’ll be bringing one in our next blog, so stay tuned. This blog was meant to give you an overview of Machine Learning, Firebase ML Kit and Flutter integration.

FlutterDevs has a team of Flutter developers who build high-quality and functionally rich apps. Hire a Flutter developer for your cross-platform Flutter mobile app project on an hourly or full-time basis, as per your requirement! You can connect with us on Facebook and Twitter for any Flutter-related queries.

Marco

December 5, 2020

I would like to train a model to recognize images of artworks which therefore have a well-defined pattern. Libraries like ArCore, ArKit or even the Vuforia and Wikitude SDKs offer the ability to use a single image per artwork. Can ML Kit be used in a similar way?